
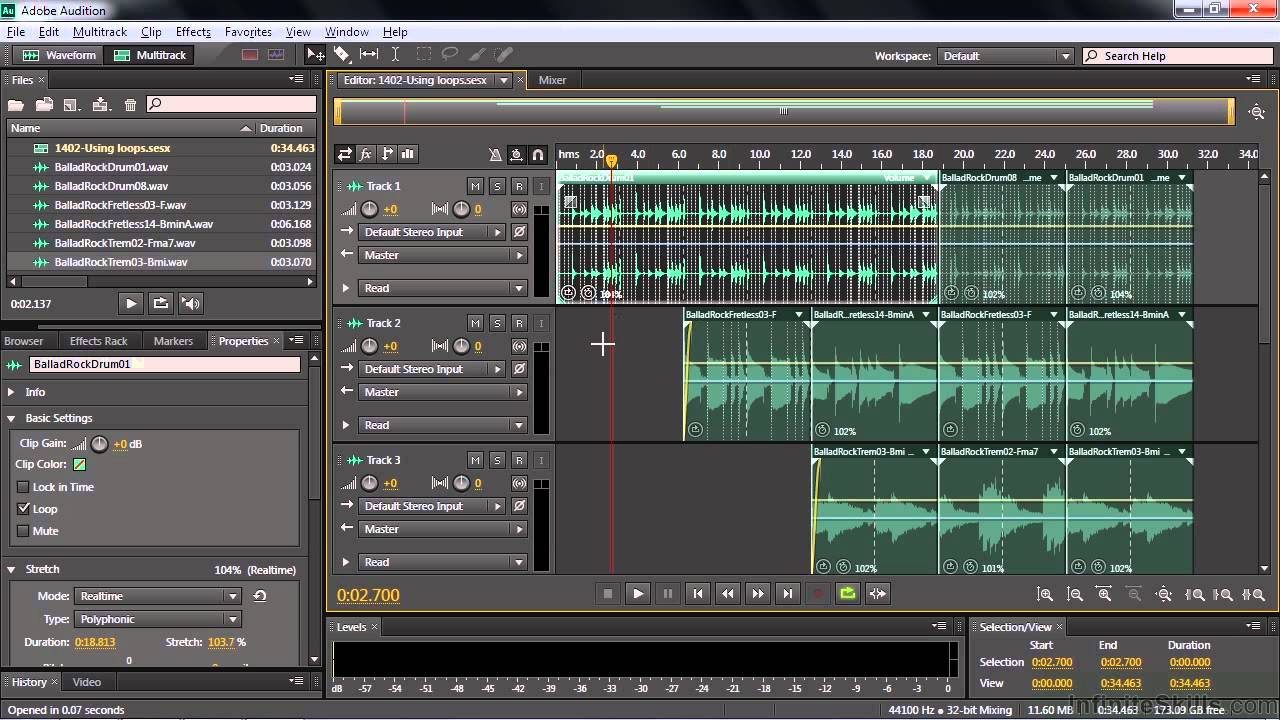

Adobe Audition voice over tutorial

9/15/2023

SoftVC VITS Singing Voice Conversion

✨ A studio that includes a visible f0 editor, a speaker mix timeline editor, and other features (where the Onnx models are used): MoeVoiceStudio
✨ A fork with a greatly improved user interface: 34j/so-vits-svc-fork
✨ A client that supports real-time conversion: w-okada/voice-changer

This project differs fundamentally from VITS: it focuses on Singing Voice Conversion (SVC) rather than Text-to-Speech (TTS). TTS functionality is not supported in this project, and VITS cannot perform SVC tasks. The models used in the two projects are not interchangeable or universally applicable.

Announcement

The purpose of this project was to enable developers to have their beloved anime characters sing. The developers intended to focus solely on fictional characters and to avoid any involvement of real individuals; anything related to real individuals deviates from that original intention.

Disclaimer

This project is an open-source, offline endeavor, and all members of SvcDevelopTeam, as well as other developers and maintainers involved (hereinafter referred to as contributors), have no control over the project. The contributors have never provided any form of assistance to any organization or individual, including but not limited to dataset extraction, dataset processing, computing support, training support, and inference. The contributors do not and cannot know the purposes for which users use the project. Therefore, any AI models and synthesized audio produced through training with this project are unrelated to the contributors. Any issues or consequences arising from their use are the sole responsibility of the user.

Terms of Use

Warning: Please ensure that you resolve any authorization issues related to the dataset on your own. You bear full responsibility for any problems arising from the use of unauthorized datasets for training, and for any resulting consequences. The repository and its maintainer, svc develop team, disclaim any association with or liability for those consequences.

The README covers the following topics:

Model Introduction: 4.1-Stable Version Update Content; Questions about compatibility with the 4.0 model; Shallow diffusion
Python Version
Pre-trained Model Files: Required (if using contentvec as the speech encoder, which is recommended; if hubertsoft is used as the speech encoder; if OnnxHubert/ContentVec is used as the encoder; List of Encoders); Optional (strongly recommended); Optional (select as required)
Dataset Preparation
Preprocessing: Resample to 44100Hz and mono; Automatically split the dataset into training and validation sets, and generate configuration files (Attention: you can modify some parameters in the generated config.json and diffusion.yaml; List of Vocoders); Generate hubert and f0
Training: Sovits Model; Diffusion Model (optional)
Inference: Attention
Optional Settings: Automatic f0 prediction; Cluster-based timbre leakage control; Feature retrieval
sovits4_for_colab.ipynb
Model compression
Timbre mixing: Static Tone Mixing; Dynamic timbre mixing
Exporting to Onnx
Reference
Previous contributors
Some legal provisions for reference

Any country, region, organization, or individual using this project must comply with the following laws:

Civil Code: Article 1019, Article 1024, Article 1027
Constitution of the People's Republic of China
Criminal Law of the People's Republic of China
Civil Code of the People's Republic of China
Contract Law of the People's Republic of China

Thanks to all contributors for their efforts.
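The preprocessing outline above names a step that automatically splits the dataset into training and validation sets. The text does not say how the project performs that split, so the following is only a minimal sketch of one common approach: hold out a few files per speaker. The function name `split_train_val`, its parameters, and the `dataset/speaker_x/...` paths are all hypothetical and are not taken from the project.

```python
import random
from typing import Dict, List, Tuple


def split_train_val(
    files_by_speaker: Dict[str, List[str]],
    val_per_speaker: int = 2,
    seed: int = 1234,
) -> Tuple[List[str], List[str]]:
    """Shuffle each speaker's files and hold out a few for validation.

    Hypothetical helper for illustration; not the project's own code.
    """
    rng = random.Random(seed)  # fixed seed so the split is reproducible
    train: List[str] = []
    val: List[str] = []
    for speaker in sorted(files_by_speaker):
        files = sorted(files_by_speaker[speaker])
        rng.shuffle(files)
        # Never hold out so many files that a speaker has no training data.
        n_val = min(val_per_speaker, max(len(files) - 1, 0))
        val.extend(files[:n_val])
        train.extend(files[n_val:])
    return train, val


if __name__ == "__main__":
    # Made-up file names standing in for resampled 44100Hz mono clips.
    demo = {
        "speaker_a": [f"dataset/speaker_a/{i:03d}.wav" for i in range(5)],
        "speaker_b": [f"dataset/speaker_b/{i:03d}.wav" for i in range(3)],
    }
    train_files, val_files = split_train_val(demo)
    print(len(train_files), "training files,", len(val_files), "validation files")
```

Splitting per speaker rather than over the pooled file list keeps every speaker represented in training, which matters when one speaker has far fewer clips than the others.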
